Repetition makes a fact seem more true, regardless of whether it is or not. Understanding this effect can help you avoid falling for propaganda, says psychologist Tom Stafford.

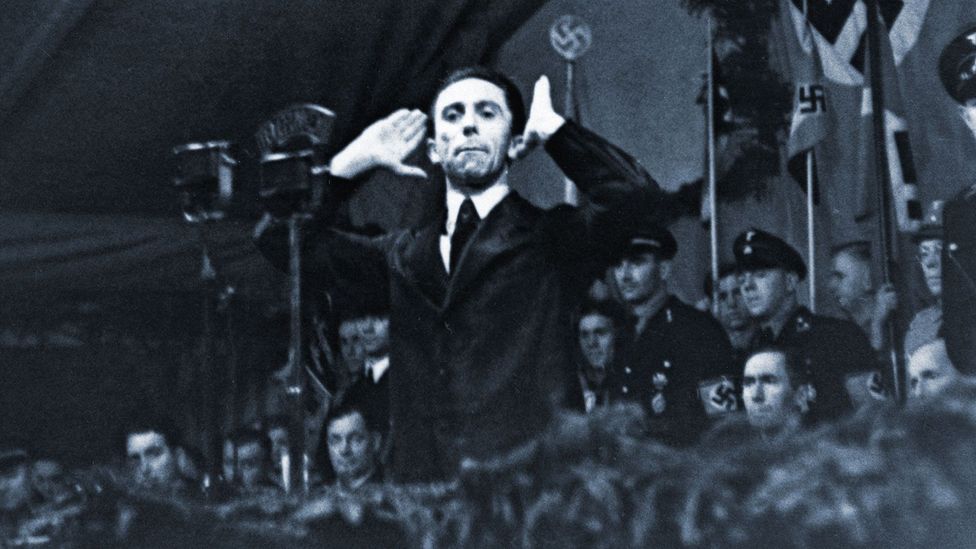

“Repeat a lie often enough and it becomes the truth” is a law of propaganda often attributed to the Nazi Joseph Goebbels. Among psychologists, something like this is known as the “illusion of truth” effect. Here’s how a typical experiment on the effect works: participants rate how true trivia items are, things like “A prune is a dried plum”. Sometimes these items are true (like that one), but sometimes participants see a parallel version which isn’t true (something like “A date is a dried plum”).

After a break – of minutes or even weeks – the participants do the procedure again, but this time some of the items they rate are new, and some they saw before in the first phase. The key finding is that people tend to rate items they’ve seen before as more likely to be true, regardless of whether they are true or not, and seemingly for the sole reason that they are more familiar.

So, here, captured in the lab, seems to be the source of the saying that if you repeat a lie often enough it becomes the truth. And if you look around, you may start to think that everyone from advertisers to politicians is taking advantage of this foible of human psychology.

But a reliable effect in the lab isn’t necessarily an important effect on people’s real-world beliefs. If you really could make a lie sound true by repetition, there’d be no need for all the other techniques of persuasion.


The ‘illusion of truth’ can be a dangerous weapon in the hands of a propagandist like Joseph Goebbels (Credit: Getty Images)

One obstacle is what you already know. Even if a lie sounds plausible, why would you set what you know aside just because you heard the lie repeatedly?

Recently, a team led by Lisa Fazio of Vanderbilt University set out to test how the illusion of truth effect interacts with our prior knowledge. Would repetition sway us even when we already know the answer? They used paired true and untrue statements, but also split their items according to how likely participants were to know the truth (so “The Pacific Ocean is the largest ocean on Earth” is an example of a “known” item, which also happens to be true, and “The Atlantic Ocean is the largest ocean on Earth” is an untrue item for which people are likely to know the actual truth).

Their results show that the illusion of truth effect worked just as strongly for known as for unknown items, suggesting that prior knowledge won’t prevent repetition from swaying our judgements of plausibility.

To cover all bases, the researchers performed one study in which the participants were asked to rate how true each statement seemed on a six-point scale, and one where they just categorised each fact as “true” or “false”. Repetition pushed the average item up the six-point scale, and increased the odds that a statement would be categorised as true. Whether statements were fact or fiction, known or unknown, repetition made them all seem more believable.

Repetition can even make known lies sound more believable (Credit: Alamy)

At first this looks like bad news for human rationality, but – and I can’t emphasise this strongly enough – when interpreting psychological science, you have to look at the actual numbers.

What Fazio and colleagues actually found is that the biggest influence on whether a statement was judged to be true was… whether it actually was true. The repetition effect couldn’t mask the truth. With or without repetition, people were still more likely to believe the actual facts as opposed to the lies.

This shows something fundamental about how we update our beliefs: repetition has the power to make things sound more true, even when we know differently, but it doesn’t override that knowledge.

The next question has to be: why might that be? The answer has to do with the effort it takes to be rigidly logical about every piece of information you hear. If every time you heard something you assessed it against everything you already knew, you’d still be thinking about breakfast at supper-time. Because we need to make quick judgements, we adopt shortcuts: heuristics which are right more often than wrong. Relying on how often you’ve heard something to judge how truthful it feels is just one such strategy. Any universe where truth gets repeated more often than lies, even if only 51% vs 49%, will be one where this is a quick and dirty rule for judging facts.

The illusion of truth is not inevitable – when armed with knowledge, we can resist it (Credit: Getty Images)

If repetition were the only thing that influenced what we believed, we’d be in trouble, but it isn’t. We can all bring to bear more extensive powers of reasoning, but we need to recognise they are a limited resource. Our minds are prey to the illusion of truth effect because our instinct is to use short-cuts in judging how plausible something is. Often this works. Sometimes it is misleading.

Once we know about the effect, we can guard against it. Part of this is double-checking why we believe what we do: if something sounds plausible, is it because it really is true, or have we just been told it repeatedly? This is why scholars are so mad about providing references, so we can track the origin of any claim rather than having to take it on faith.

But part of guarding against the illusion is the obligation it puts on us to stop repeating falsehoods. We live in a world where the facts matter, and should matter. If you repeat things without bothering to check if they are true, you are helping to make a world where lies and truth are easier to confuse. So, please, think before you repeat.

—

Tom Stafford’s ebook on when and how rational argument can change minds is out now. If you have an everyday psychological phenomenon you’d like to see written about in these columns please get in touch with @tomstafford on Twitter, or ideas@idiolect.org.uk.